Noise2Void¶

Overview¶

Noise2Void [1, 2] is a self-supervised denoising method. It trains by randomly masking pixels in the input image and predicting their masked value from the surrounding pixels.

N2V relies on two fundamental hypotheses:

- The underlying structures are smooths (i.e. continuous)

- The noise is pixel-wise independent (i.e. the noise in one pixel is not correlated with the noise in a neighboring pixel)

The corollary from these hypotheses is that if we consider the value of a pixel, $x$, as being the sum of the true signal, $s$, and a ceertain amount of noise, $\epsilon$, such that $x = s + \epsilon$, then:

- The true signal value $s$ can be estimated from the surrounding pixels

- The noise $\epsilon$ cannot be estimated from the surrounding pixels

Therefore, in cases where the hypotheses hold, if a pixel is masked, its noise cannot be estimated from the surrounding pixels, while the true signal can. This is exactly how N2V is trained!

Training Noise2Void¶

Noise2Void is self-supervised, meaning that it trains on the data itself. It relies on a classical UNet architecture [4], and applies a special augmentation called N2V manipulate that replaces the value of randomly-selected pixels with the value of one of their neighbours (see the left column in the next figure).

During training, the output of the network is compared to an image that is only comprised of the original pixels that were obfuscated during the augmentation. The loss is only computed on these obfuscated pixels, which means that the network is trained specifically to predict the masked pixels values!

Below you can see two examples of the input to the network (left) and the masked pixels used to compute the loss (right). The first example is a toy image that allows visualizing the pixel replacement (it masks $2\%$ of the pixels). The second example is a realistic image with the default N2V pixel manipulation parameters (e.g. $0.2\%$ of masked pixels).

Fig 1.: Noise2Void masking scheme. Top row: a toy example showcasing the pixel value replacement of masked pixels (left), and the resulting mask used for supervision (right). The mask contains the original values of the masked pixels. Bottom row: realistic example with the default masking parameters (data from 3). CC-BY.

Interpreting the loss¶

As described above, the loss is computed by calculating the mean squared error over the masked pixels:

$$loss = \dfrac{1}{N_{masked}}\sum_{i, masked}(x'_{i}-x_{i})^{2}$$

where $N_{masked}$ is the total number of masked pixels, $x'_{i}$ is the network prediction for the masked pixel $i$, and $x_{i}$ is the original noisy value of pixel $i$.

As opposed to supervised approaches, where the loss is computed with respect to a known ground truth, N2V computes the loss based on a noisy signal. At the beginning of the training the network usually learns within a few epochs an approximation of the structures in the image and the loss decreases sharply. Then, it rapidly reaches a plateau and oscillates around a particular loss value.

Because the loss is computed between prediction and noisy signal, its absolute value is not informative for whether the network is properly trained. Likewise, oscillation on the plateau does not indicate that it does not learn anymore.

The best way to assess the quality of the training is to look at the denoised images, potentially at different points during training, using the checkpoints. In practice, we often simply train long enough for the image to look properly denoised!

If the training loss is not too informative, what about the validation loss? It is basically the same. If your validation loss increases, however, that does mean you are overfitting and might need to add more images to the training data.

Predicting on the training set¶

We are usually told that training and validation images should not be used to assess the performance (even qualitatively) of the network, because it was trained specifically to perform well on them.

With Noise2Void, however, the network is trained without biasing it towards a ground-truth as it is trained on noisy pixels only. Therefore, it is perfectly fine to predict on the training images.

Re-using a trained model¶

Noise2Void learns both the noise distribution in the image and a structural prior, that is to say how the structures in the image look. As with most deep learning approaches, if you try applying a trained neural network on images that are different from the training set, the network will most likely fail and produce results of lesser quality.

This will happen with N2V, for example, if the noise distribution is different in the new images, or if they contain different structures. Fortunately, N2V is quick to train, so you can train a single network for each new experiment!

Why do N2V predictions sometimes look blurry?¶

The absence of noise can by itself make images look slightly blurry. If some regions of your data look unreasonably blurry, you might be encountering the a case of regression to the mean.

For each noisy image, we often say that there is a whole distribution of possible denoised images. In particular, for strongly degraded images, even different structures can produce the same noisy patch.

Because of this, a network trained with the mean squared error, such as N2V, will tend to predict the average of all possible denoised images. This is why the prediction can often look blurry and washed out. For approaches that predict single instances from the distribution of possible denoised images, check out DivNoising or HDN.

Assessing quality of results¶

Denoising is a difficult task to assess quantitatively. The most common and more accurate way to estimate the performances of a network is to compare its output with a ground truth image. That means that you need to acquire images with and without noise. This can be done by acquiring with more laser power, longer exposure time, or by averaging multiple images. That is, however, cumbersome and often impossible.

There are other ways perform controls on the quality of the denoising.

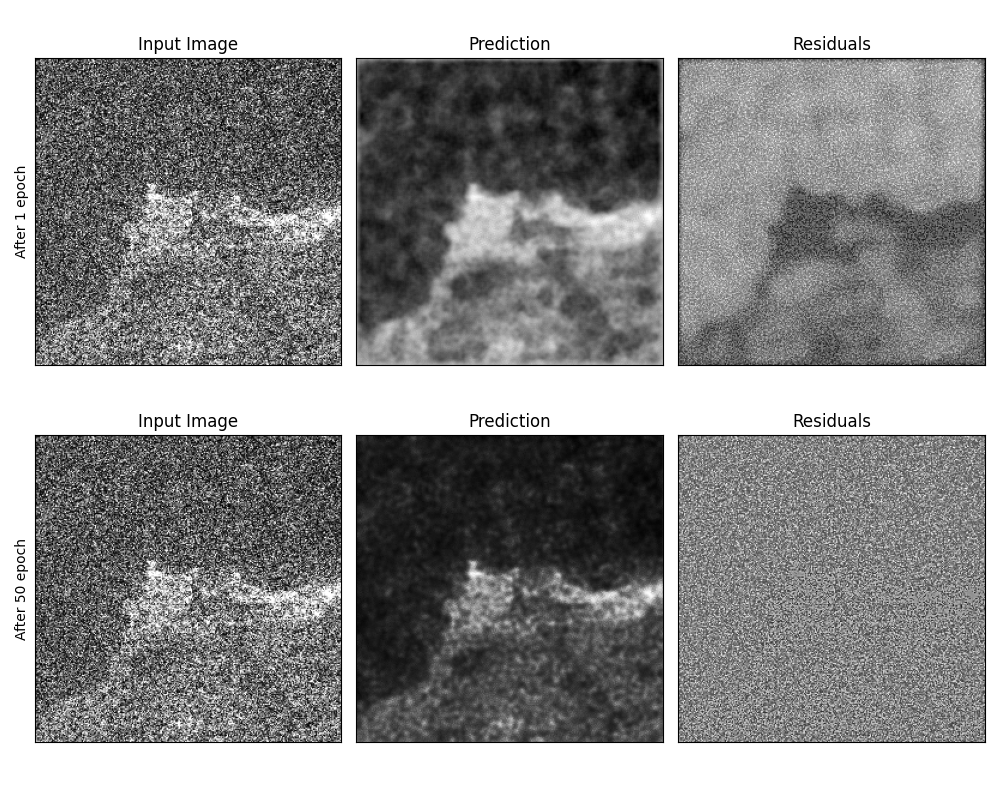

Quality assessment by inspecting the residuals¶

The residuals are the pixel intensity removed from the original image by the network:

$$res = image - pred$$

They correspond to the subtraction of the prediction from the original image. If the residual show details of the image structure, then the training went wrong or the network did not train for long enough.

Fig 2.: The residuals are a good indicator of successful training. Top row: input image (left), prediction (center) and corresponding residuals (right) after one training epoch. The residuals show structures. Bottom row: same after 50 epochs, there is no visible structure that is not pure noise. CC-BY.

Note that in the presence of Poisson noise (a.k.a shot noise), the amplitude of noise scales with the square root of the signal. Therefore, the residuals can still be modulated by the structures in the image, especially the bright ones. Please note that a property of the residuals is that the sum of its pixels should always be close to zero: even if some areas of the residuals look brighter, on closer inspection, you should see a lot of very dark pixels in this very region.

Assessing quality by training multiple networks¶

As Noise2Void training is stochastic, in order to assess whether structures are really in your image, you can perform different types of experiments:

- Split your images in several subsets and train independent networks on each of them. Compare the results.

- Train multiple randomly initialized networks on your training data and compare the results.

Limitations¶

Pixel-wise independent noise¶

It might happen that the noise in your images is not pixel-wise independent. In this case, the network can learn the amount of noise in the masked pixels from its neighboring pixels. Noise2Void might then introduce small artefacts or simply reinforce the correlated pattern in the denoised image.

Correlations in the noise can sometimes be observed with sCMOS camera or point-scanning microscopy methods. To estimate whether the noise is correlated, one can perform image autocorrelation and inspect the shape of the central peak in the distribution. The next figure shows two examples of the same image with artificial Gaussian noise. In the second one, we introduced a correlation in the Gaussian noise, leading to an horizontal line in the autocorrelation image.

Fig 3.: Structured noise. Top row: image with uncorrelated noise (left) and its autocorrelation (close-up on the zero-shift component). Bottom row: same images with correlations introduced in the noise component. CC-BY.

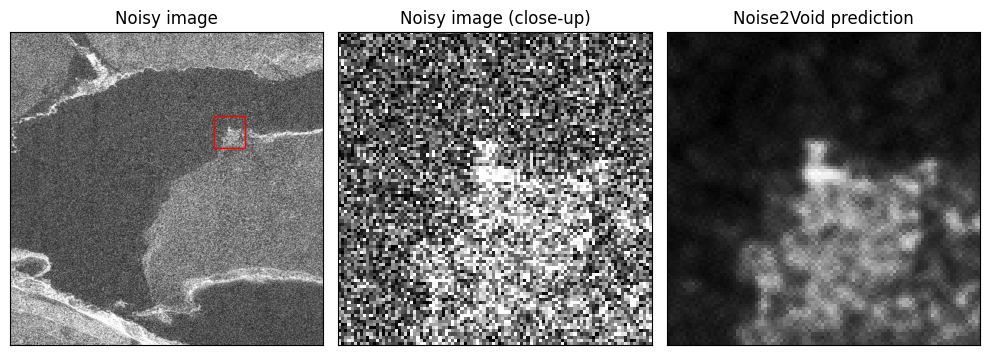

Checkerboard artefacts¶

Checkerboard artefacts [5] sometimes occur with Noise2Void. This effect is most prominent in the presence of salt and pepper noise or hot pixels in sCMOS cameras. They are characterized by small cross patterns in the denoised image (see figure 4). To reduce the presence of these artefacts, you can use N2V2.

Fig 4.: Checkerboard artefacts. The figure shows a noisy image crop (left), a noisy close-up (center) and the corresponding Noise2Void prediction (right). While the denoising was effective, a distinctive checkerboard pattern nonetheless appears in the Noise2Void prediction. CC-BY.

References¶

[1] Alexander Krull, Tim-Oliver Buchholz, and Florian Jug. "Noise2Void - learning denoising from single noisy images." CVPR, 2019. link

[2] Joshua Batson, and Loic Royer. "Noise2Self: Blind denoising by self-supervision." MLR, 2019. link

[3] Tim-Oliver Buchholz, Mangal Prakash, Deborah Schmidt, Alexander Krull, and Florian Jug. "Denoiseg: joint denoising and segmentation." ECCV, 2020. link

[4] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. "U-net: Convolutional networks for biomedical image segmentation." MICCAI, 2015. link

[5] Eva Höck, Tim-Oliver Buchholz, Anselm Brachmann, Florian Jug, and Alexander Freytag. "N2V2 - Fixing Noise2Void Checkerboard Artifacts with Modified Sampling Strategies and a Tweaked Network Architecture." ECCV, 2022. link